Adventures in Machine Learning

How I taught a neural network to detect smokers and the lessons I've learned along the way

Late last year, I embarked on a journey to unravel the mysteries of Machine Learning from scratch. My background isn't in Computer Science, and I'm certainly not a coding wizard. However, I've always had a knack for tinkering with code in creative projects. With a burgeoning interest in AI, I thought, "Why not start with the basics of Machine Learning?", especially since it's a stepping stone to understanding the more complex world of AI, particularly Generative AI models (a subset of AI that excels at creating new content: text, images, audio, etc.). And what better way to learn than by building my own simple(r) model? After all, there's no teacher like hands-on experience!

So here's the plan: I'm dividing this post into two main sections. The first part is all about the lessons I've picked up along the way – insights that I think are pretty universal, whether you're a technologist or just casually curious about AI. It's about the journey, the hiccups, and the eureka moments that transcend the techy bits. In the latter half, I'll share some technical insights, including a glimpse into the modeling process and a link to my Colab notebook. It's a straightforward look at the steps I took, complete with my code and a summary of the model's performance. Whether you're well-versed in ML or just starting out, I hope you'll find it both informative and approachable.

Let's dive in!

I. The takeaways

Patience and Diligence

Building a model is like waiting for your favorite band to release a new album – it takes time and can test your patience. Juggling various hyperparameters (fancy term for settings that you adjust in machine learning) can be mind-boggling. There were times when I thought I had nailed it, but the results said otherwise. This is a common theme in the field, and it taught me to take a deep breath, maybe grab a coffee, and start over.

Documentation became my best friend. Over the years, I've maintained a habit of meticulously noting down every little detail of my experiments. This practice turned out to be a lifesaver. Imagine having a diary, but instead of 'Dear Diary, today I...', it's filled with 'Model 16: Adjusted the learning rate and batch size'. This helped me keep track of my progress and provided valuable insights into the quirks and features of my model.
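
A diary like this doesn't need special tooling. Here's a minimal sketch of one way to keep it, using only Python's standard library. The field names and every value in it are made up for illustration (they are not my actual runs), and an in-memory buffer stands in for a real file.

```python
import csv
import io

# Hypothetical fields for illustration; adapt them to whatever you tweak.
FIELDS = ["model_id", "learning_rate", "batch_size", "val_accuracy", "note"]


def log_run(stream, row, write_header=False):
    """Append one experiment record to an open text stream as a CSV row."""
    writer = csv.DictWriter(stream, fieldnames=FIELDS)
    if write_header:
        writer.writeheader()
    writer.writerow(row)


# An in-memory buffer stands in for a real file opened in append mode.
log = io.StringIO()
log_run(log, {"model_id": 15, "learning_rate": 0.001, "batch_size": 32,
              "val_accuracy": 0.61, "note": "baseline"}, write_header=True)
log_run(log, {"model_id": 16, "learning_rate": 0.0005, "batch_size": 64,
              "val_accuracy": 0.66,
              "note": "Adjusted the learning rate and batch size"})

# Read the diary back and find the best run so far (made-up numbers).
log.seek(0)
runs = list(csv.DictReader(log))
best = max(runs, key=lambda r: float(r["val_accuracy"]))
print(best["model_id"], "-", best["note"])
```

The point of a flat file is that it can be sorted, filtered, or loaded into pandas later without remembering what each run was about.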

Iterative Mindset

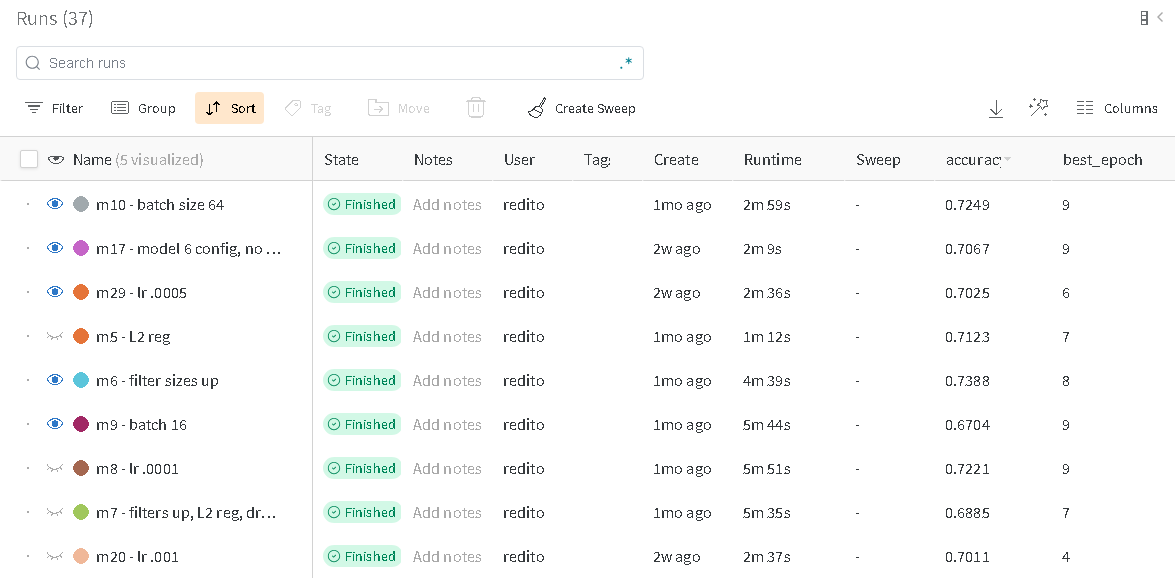

Remember how I mentioned starting simple? Well, I kind of threw that out the window and built over 30 different models. It was a marathon of tweaking one parameter, training, evaluating, then going back to the drawing board. Some changes were like finding a golden ticket, while others... not so much. But every tweak was a step towards a better understanding of what works and what doesn't.

Embracing Randomness

Machine Learning models have a bit of a 'roll the dice' aspect to them. The training process involves a good deal of randomness, from the initial setup of the model to the way it learns during training. It's like trying to predict the weather – you have a general idea, but sometimes it just decides to rain on your parade. This unpredictability, frustrating as it may be, is also what makes machine learning both challenging and exciting.

And just to clarify, when I say 'random', it's not the same as chaos. There's method to the madness, but it's not as straightforward as traditional software development.
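
To make the "method to the madness" concrete, here's a small sketch in plain Python. The function `noisy_training_run` is a hypothetical stand-in for a real training run: it only mimics the two random ingredients mentioned above, the initial weights and the order in which examples are seen. Fixing the seed makes both repeatable.

```python
import random


def noisy_training_run(seed):
    """Stand-in for a training run: random initial weights plus a shuffled data order."""
    rng = random.Random(seed)  # private generator, so runs don't affect each other
    weights = [rng.uniform(-1, 1) for _ in range(3)]  # random initialization
    order = list(range(8))
    rng.shuffle(order)  # random order in which examples are visited
    return weights, order


run_a = noisy_training_run(seed=42)
run_b = noisy_training_run(seed=42)
run_c = noisy_training_run(seed=7)

print(run_a == run_b)  # same seed, same "random" choices
print(run_a == run_c)  # different seed, different choices
```

Real frameworks expose a similar knob (TensorFlow has `tf.random.set_seed`, for example), though a seed alone may not make a full training run bit-for-bit reproducible on every machine.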

Time-Consuming? Absolutely!

I'm usually good at time management, but ML had other plans. What I thought would be a quick one-hour task often turned into a multi-hour saga. It's like binge-watching a series; you think, 'Just one more episode,' and suddenly it's 2 AM. But, as I get more hands-on experience, I'm learning to better estimate the time needed for these projects and streamline my workflow.

Building Intuition

Diving into the world of model building is like opening a Pandora's box of complexity. The number of parameters can skyrocket, and tweaking them is more art than science. Initially, these models might seem like mysterious 'black boxes', but as you get cozy with the underlying math, code, and hands-on practice, you start developing an intuition. It's like learning to cook – at first, you're strictly following recipes, but over time, you start experimenting and trusting your gut. And that's when the real magic happens.

Standing on the Shoulders of Giants

My adventure began with code from the excellent "Generative Deep Learning (2nd Edition)" textbook. This code was a fantastic springboard, saving me countless hours. The open-source tools I used (TensorFlow, NumPy, pandas, matplotlib, etc.) are the unsung heroes of my journey, making complex tasks more manageable. And let's not forget the invaluable assistance of ChatGPT for debugging – talk about a lifesaver!

II. The Model

As for my project, it was my first rodeo with a complete machine learning workflow, which, for the uninitiated, typically looks something like this:

1. Collect Data
2. Analyze and Preprocess Data
3. Build Model
4. Train Model
5. Evaluate
6. Iterate based on Results
7. Inference
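
To show how the steps above fit together, here's a deliberately tiny sketch in plain Python. It is not my smoker model: the data is synthetic, and the "model" is a single threshold on one number (an assumption chosen purely so the whole loop fits on one screen).

```python
import random

rng = random.Random(0)

# 1. Collect data: synthetic (feature, label) pairs; label 1 tends to have larger features.
data = [(rng.gauss(2.0, 1.0), 1) for _ in range(100)]
data += [(rng.gauss(-2.0, 1.0), 0) for _ in range(100)]

# 2. Analyze and preprocess: shuffle, then split into training and held-out sets.
rng.shuffle(data)
train, held_out = data[:150], data[150:]


# 3. Build model: one threshold; predict 1 when the feature exceeds it.
def predict(threshold, x):
    return 1 if x > threshold else 0


def accuracy(threshold, rows):
    return sum(predict(threshold, x) == y for x, y in rows) / len(rows)


# 4-6. Train, evaluate, iterate: try candidate thresholds from -3.0 to 3.0
# and keep the one that scores best on the training set.
candidates = [t / 10 for t in range(-30, 31)]
best_threshold = max(candidates, key=lambda t: accuracy(t, train))

# Final evaluation on data the model has never seen.
held_out_accuracy = accuracy(best_threshold, held_out)
print(f"threshold={best_threshold}, held-out accuracy={held_out_accuracy:.2f}")

# 7. Inference: classify a brand-new value.
print(predict(best_threshold, 1.7))
```

A real image classifier swaps the threshold for millions of learned weights, but the shape of the loop is the same.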

The challenge I set for myself was quite intriguing: to create a model that could discern whether a person in a photo is smoking. Why this specific task, you might wonder? Well, image classification can cover a range of topics, like identifying if someone is wearing glasses. However, detecting smoking posed a more intricate challenge. It's something humans can usually identify at a glance, but for a machine, it's a whole different ball game. The machine needs to learn not just what a person looks like but also the subtler nuances that indicate smoking. Luckily, I found a curated smoker dataset on Kaggle, which was a great resource. It meant I didn't need to start from scratch in collecting and categorizing countless images.

For this task, I opted for a convolutional neural network (CNN). These networks are the bread and butter of image-related deep learning projects. Why a CNN? Well, since we're dealing with images, CNNs are ideal as they excel in capturing the spatial hierarchy in an image, which is basically saying "they're good at understanding pictures."
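
If "capturing the spatial hierarchy" sounds abstract, the core operation is surprisingly small. Below is a sketch of a single convolution step in plain Python (strictly speaking a cross-correlation, which is what deep learning libraries usually compute under that name). The 4x4 image and the filter are hand-picked for illustration; in a real CNN the filter values are learned during training, and many filters are stacked in layers.

```python
def convolve2d(image, kernel):
    """Slide the kernel over the image (no padding, stride 1) and sum the products."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[i + di][j + dj] * kernel[di][dj]
                for di in range(kh) for dj in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]


# A 4x4 "image": dark on the left (0), bright on the right (1).
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]

# A hand-picked filter that responds to dark-to-bright vertical edges.
kernel = [
    [-1, 1],
    [-1, 1],
]

feature_map = convolve2d(image, kernel)
print(feature_map)  # [[0, 2, 0], [0, 2, 0], [0, 2, 0]]
```

The output lights up only in the middle column, exactly where the edge is. Later layers combine simple detectors like this one into detectors for more complex shapes.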

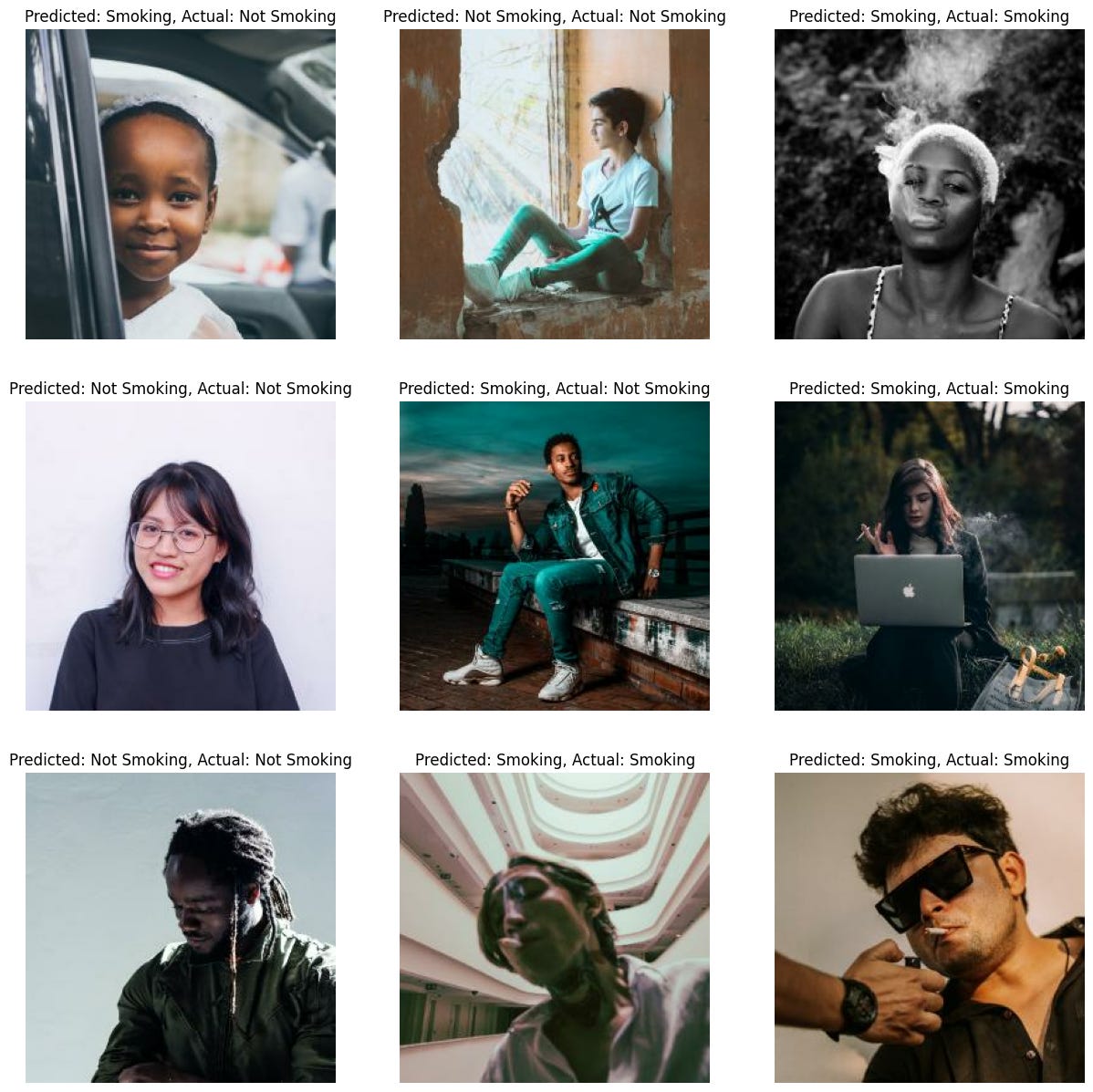

After about 30 iterations and roughly 37 training attempts, I managed to strike a sweet spot with the model, achieving a respectable 70% accuracy while keeping the error rate low. The following image illustrates the model's performance, correctly classifying 7 out of 9 images.
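
For anyone curious how these numbers are computed: accuracy is just the fraction of predictions that match the true labels. The sketch below uses made-up labels for a nine-image sample with two mistakes (I'm not reproducing the actual images here), where 1 means "smoking" and 0 means "not smoking".

```python
def accuracy(predictions, labels):
    """Fraction of predictions that match the true labels."""
    return sum(p == y for p, y in zip(predictions, labels)) / len(labels)


# Hypothetical nine-image sample: two of the predictions are wrong.
labels      = [1, 1, 0, 0, 1, 0, 1, 0, 1]
predictions = [1, 1, 0, 1, 1, 0, 0, 0, 1]

sample_accuracy = accuracy(predictions, labels)
print(f"accuracy:   {sample_accuracy:.1%}")      # 77.8%
print(f"error rate: {1 - sample_accuracy:.1%}")  # 22.2%
```

Seven out of nine works out to roughly 78%, a little above the overall 70%. That gap is expected: nine images is a tiny sample, so its score can easily land above or below the accuracy measured on the full evaluation set.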

For those of you with a technical inclination and some Python know-how, feel free to dive into my Colab Notebook here. It's where I've documented the entire process, complete with code and my commentary. If you have a Colab account, you're more than welcome to run the code and see it in action. Alternatively, you can find the project on my GitHub here. A detailed report of my training runs and the insights gleaned from them is available here.

Conclusion

Machine Learning, to me, is akin to alchemy. Like an alchemist, you mix and match different elements, hoping to concoct the perfect solution. It requires patience, an iterative approach, a tolerance for a bit of randomness, and standing on the shoulders of those who came before. These lessons, while rooted in ML, are universally applicable – be it in projects, art, or even music composition.

What's your alchemy? Whether it’s in technology, art, cooking, or any other field, I’d love to hear about your experiences of mixing, matching, and experimenting. Share your stories in the comments!

🌀